This is a must-watch video from the Allen Institute for AI for anyone seriously interested in artificial intelligence. It’s 70 minutes long, but worth it. Some of the highlights from my perspective are:

- 27:27, where the key reason that deep learning approaches fail at understanding language is discussed

- 31:30, where the inability of inductive approaches to address logical quantification or variables is discussed

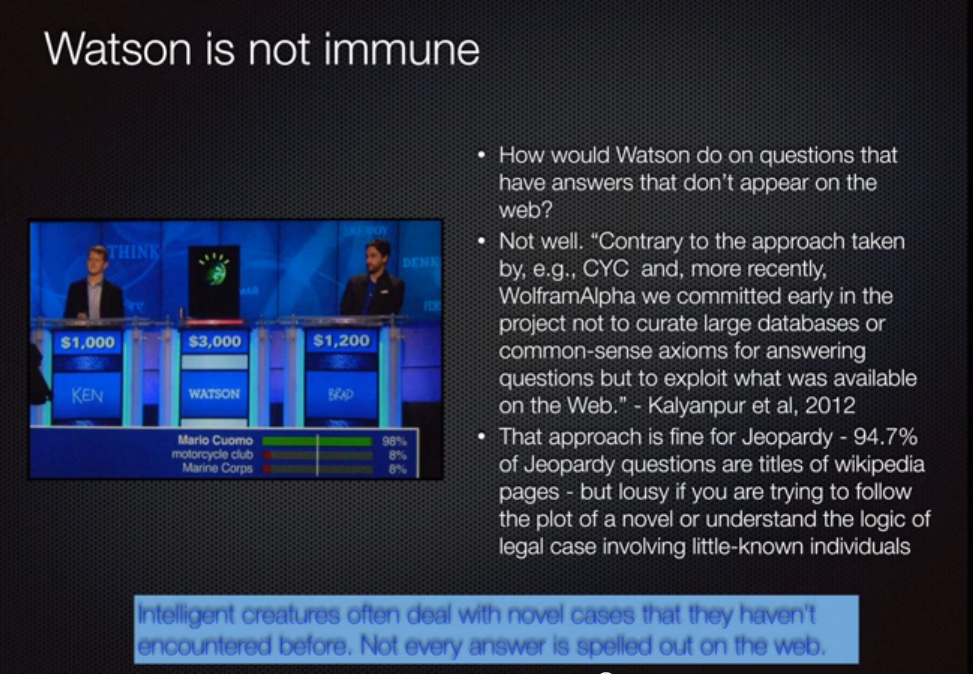

- 39:30 through 42+, where the inability of deep learning to perform as well as Watson and the inability of Watson to understand or reason are discussed

The astute viewer and blog reader will recognize this slide as discussed by Oren Etzioni here.

Ian Ayres, the author of Super Crunchers, gave a keynote at Fair Isaac’s Interact conference in San Francisco this morning. He made a number of interesting points related to his thesis that intuitive decision making is doomed. I found his points on random trials much more interesting, however.