Thanks to John Sowa’s comment on LinkedIn for this link which, although slightly dated, contains the following:

In August, I had the chance to speak with Peter Norvig, Director of Google Research, and asked him if he thought that techniques like deep learning could ever solve complicated tasks that are more characteristic of human intelligence, like understanding stories, which is something Norvig used to work on in the nineteen-eighties. Back then, Norvig had written a brilliant review of the previous work on getting machines to understand stories, and fully endorsed an approach that built on classical “symbol-manipulation” techniques. Norvig’s group is now working with Hinton, and Norvig is clearly very interested in seeing what Hinton could come up with. But even Norvig didn’t see how you could build a machine that could understand stories using deep learning alone.

Other quotes along the same lines come from Oren Etzioni in Looking to the Future of Data Science:

- But in his keynote speech on Monday, Oren Etzioni, a prominent computer scientist and chief executive of the recently created Allen Institute for Artificial Intelligence, delivered a call to arms to the assembled data mavens. Don’t be overly influenced, Mr. Etzioni warned, by the “big data tidal wave,” with its emphasis on mining large data sets for correlations, inferences and predictions. The big data approach, he said during his talk and in an interview later, is brimming with short-term commercial opportunity, but he said scientists should set their sights further. “It might be fine if you want to target ads and generate product recommendations,” he said, “but it’s not common sense knowledge.”

- The “big” in big data tends to get all the attention, Mr. Etzioni said, but thorny problems often reside in a seemingly simple sentence or two. He showed the sentence: “The large ball crashed right through the table because it was made of Styrofoam.” He asked, What was made of Styrofoam? The large ball? Or the table? The table, humans will invariably answer. But the question is a conundrum for a software program, Mr. Etzioni explained.

- Instead, at the Allen Institute, financed by Microsoft co-founder Paul Allen, Mr. Etzioni is leading a growing team of 30 researchers that is working on systems that move from data to knowledge to theories, and then can reason. The test, he said, is: “Does it combine things it knows to draw conclusions?” This is the step from correlation, probabilities and prediction to a computer system that can understand.

This is a significant statement from one of the best people in fact extraction on the planet!

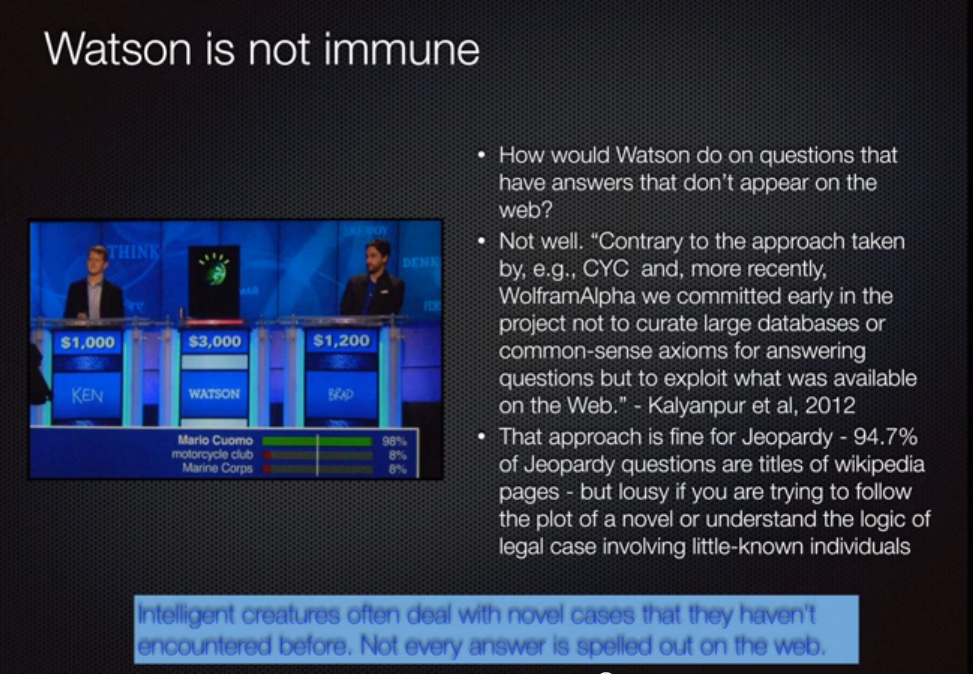

As you know from elsewhere on this blog, I’ve been involved with the precursor to the AIAI (Vulcan’s Project Halo) and am a fan of Watson. But Watson is the best example of what Big Data, Deep Learning, fact extraction, and textual entailment aren’t even close to:

- During a Final Jeopardy! segment that included the “U.S. Cities” category, the clue was: “Its largest airport was named for a World War II hero; its second-largest, for a World War II battle.”

- Watson responded “What is Toronto???,” while contestants Jennings and Rutter correctly answered Chicago — for the city’s O’Hare and Midway airports.

Sure, you can rationalize these things and hope that someday the machine will not need reliable knowledge (or that it will induce enough information with enough certainty). IBM does a lot of this (e.g., see the source of the quotes above). That day may come, but it will happen a lot sooner with curated knowledge.
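To make the point about curated knowledge concrete, here is a minimal sketch of how a handful of hand-entered facts would settle the Final Jeopardy! clue. Everything in it is an assumption for illustration: the dictionary names, the category labels, and the `answer` function are hypothetical, and this is in no way how Watson works. The facts themselves are the well-known namesakes of the airports involved.

```python
# A toy, hand-curated knowledge base (hypothetical schema, for illustration only).

# Airports per city, ordered largest first.
AIRPORTS = {
    "Chicago": ["O'Hare", "Midway"],
    "Toronto": ["Pearson", "Billy Bishop"],
}

# What kind of thing each airport is named for.
NAMESAKE_KIND = {
    "O'Hare": "WWII hero",        # Edward "Butch" O'Hare, U.S. Navy aviator
    "Midway": "WWII battle",      # Battle of Midway
    "Pearson": "prime minister",  # Lester B. Pearson
    "Billy Bishop": "WWI hero",   # Canadian flying ace of World War I
}

# Cities that belong to the "U.S. Cities" category.
US_CITIES = {"Chicago"}


def answer(candidates, largest_kind, second_kind):
    """Return the candidate cities whose two largest airports match the clue."""
    matches = []
    for city in sorted(candidates):
        airports = AIRPORTS.get(city, [])
        if len(airports) < 2:
            continue  # not enough curated facts to decide
        if (NAMESAKE_KIND.get(airports[0]) == largest_kind
                and NAMESAKE_KIND.get(airports[1]) == second_kind):
            matches.append(city)
    return matches


# Restricting candidates to the category already rules Toronto out.
print(answer(US_CITIES, "WWII hero", "WWII battle"))

# Even without the category constraint, the namesake facts exclude Toronto.
print(answer({"Chicago", "Toronto"}, "WWII hero", "WWII battle"))
```

The sketch combines three things it knows (which airports a city has, what each is named for, and which cities fit the category) to draw a conclusion, which is Etzioni's test in miniature. The hard part, of course, is getting from the clue's English to that query and curating facts at scale.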