The Winograd Challenge is an alternative to the Turing Test for assessing artificial intelligence. The essence of the test is resolving pronouns. To date, systems have not fared well on the test, for several reasons. Three come to mind:

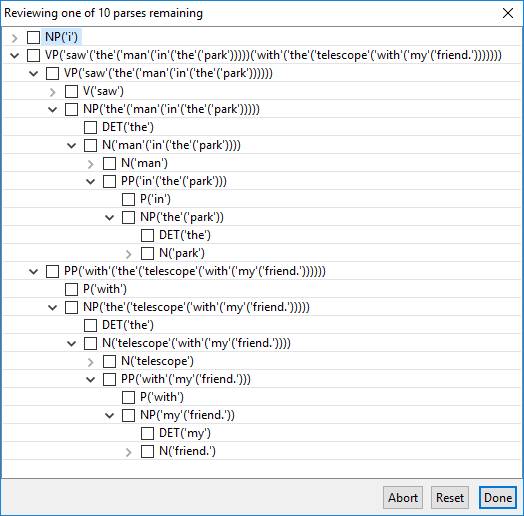

- The natural language processing involved in the word problems is beyond the state of the art.

- Resolving many of the pronouns requires more commonsense knowledge than state-of-the-art systems possess.

- Resolving many of the problems requires pragmatic reasoning beyond the state of the art.

As an example, one of the simpler sample problems is:

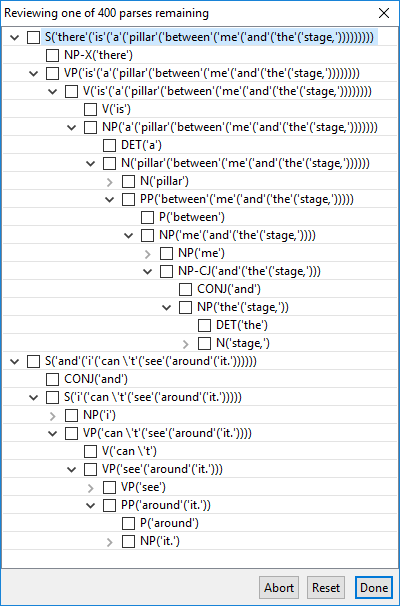

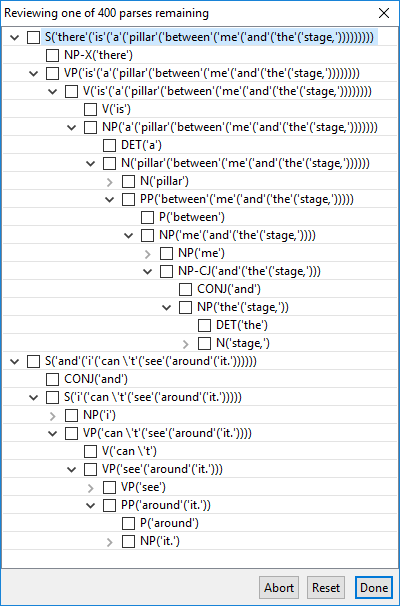

- There is a pillar between me and the stage, and I can’t see around it.

A heuristic system (or a deep learning one) could infer that “it” does not refer to “me” or “I” and toss a coin between “pillar” and “stage”. A system worthy of passing the Winograd Challenge should “know” it’s the pillar.
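To make the coin toss concrete, here is a minimal sketch of such a heuristic baseline. The mention list is typed in by hand and the names `FIRST_PERSON`, `candidate_antecedents`, and `coin_toss_resolver` are made up for illustration; a real system would get its candidates from a parser.

```python
import random

# Assumption: a small hand-written set of first-person forms, for illustration only.
FIRST_PERSON = {"i", "me", "my", "we", "us"}

def candidate_antecedents(mentions, pronoun):
    """Drop mentions that a third-person pronoun like 'it' cannot refer to."""
    if pronoun.lower() == "it":
        return [m for m in mentions if m.lower() not in FIRST_PERSON]
    return list(mentions)

def coin_toss_resolver(mentions, pronoun, rng=random):
    """Pick uniformly at random among the candidates that survive filtering."""
    return rng.choice(candidate_antecedents(mentions, pronoun))

# Mentions from "There is a pillar between me and the stage, and I can't see around it."
mentions = ["pillar", "me", "stage", "I"]
remaining = candidate_antecedents(mentions, "it")  # "me" and "I" are filtered out
guess = coin_toss_resolver(mentions, "it", random.Random(0))
```

The filter narrows four mentions to two, but nothing in it prefers “pillar” over “stage”. That preference has to come from knowing what can block a view.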

Even this simple sentence presents some NLP challenges that are easy to overlook. For example, does “between” modify the pillar or the verb “is”?
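The two attachment options can be written down as data. This is only a sketch: the `Attachment` type and the labels are hypothetical and do not reflect any particular parser's output format.

```python
from typing import NamedTuple

class Attachment(NamedTuple):
    head: str       # the word the phrase modifies
    dependent: str  # the modifying phrase

PHRASE = "between me and the stage"

# The two candidate readings of the prepositional phrase.
readings = {
    "noun attachment": Attachment(head="pillar", dependent=PHRASE),
    "verb attachment": Attachment(head="is", dependent=PHRASE),
}

def heads_for(phrase, readings):
    """List every head the phrase could attach to, in sorted order."""
    return sorted(r.head for r in readings.values() if r.dependent == phrase)

possible_heads = heads_for(PHRASE, readings)
```

A parser has to commit to one of these heads, or carry both forward, before pronoun resolution even begins.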

This is not much of a challenge, however, so let’s touch on some deeper issues and a more challenging problem…
