Many users end up wanting to import sentences into the Linguist rather than type or paste them in one at a time. One way to do this is to right-click on a group within the Linguist and import a file of sentences, one per line, into that group. But if you want to import a large document and retain its outline structure, some application-specific use of the Linguist APIs may be the way to go.

Is business logic too much for classical logic?

Business logic is not limited to mathematical logic, as in first-order predicate calculus.

Business logic commonly requires “aggregation” over sets of things. For example, determining an owner’s equity in a property means summing the values of the claims against that property and subtracting that sum from the property’s value.

- The equity of the owner of a property in the property is the excess of the value of the property over the value of claims against it.

There are various ways of describing such extended forms of classical logic. The most relevant to most enterprises is the relational algebra perspective, which is the basis for relational databases and SQL. Another is the notion of generalized quantifiers.

In either case, it is a practical matter to be able to capture such logic in a rigorous manner. The example below shows how that can be accomplished using English, producing the following axiom in extended logic:

- ∀(?x15)(property(?x15)→∀(?x10)(owner(of)(?x10,?x15)→∃(?x31)(value(of(?x15))(?x31)∧∑(?x44)(∀(?x49)(claim(against(?x15))(?x49)→value(of(?x49))(?x44))→∃(?x26)(excess(of(?x31))(over(?x44))(?x26)∧equity(in(?x15))(of(?x10))(?x26))))))
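As a rough illustration of the aggregation this axiom expresses, here is a minimal Python sketch. The `Claim` and `Property` classes and the `equity` function are hypothetical names chosen for this example; they are not part of the Linguist or of any deployment platform discussed here. The sketch also assumes a single owner holds all of the equity.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Claim:
    """A claim against a property (hypothetical type for illustration)."""
    value: int


@dataclass
class Property:
    """A property with a value and any claims against it (hypothetical type)."""
    value: int
    claims: List[Claim] = field(default_factory=list)


def equity(prop: Property) -> int:
    """Excess of the property's value over the summed value of its claims."""
    # The aggregation step: sum over the set of claims against the property.
    total_claims = sum(claim.value for claim in prop.claims)
    return prop.value - total_claims


house = Property(value=500_000, claims=[Claim(300_000), Claim(25_000)])
print(equity(house))
```

The sum over an empty set of claims is zero, so a property with no claims against it yields equity equal to its full value.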

This logic can be realized in various ways, depending on the deployment platform, such as:

Continue reading “Is business logic too much for classical logic?”

Simply Smarter Intelligent Agents

Deep learning can produce some impressive chatbots, but they are hardly intelligent. In fact, they are precisely ignorant in that they do not think or know anything.

More intelligent dialog with an artificially intelligent agent involves both knowledge and thinking. In this article, we educate an intelligent agent that reasons to answer questions.

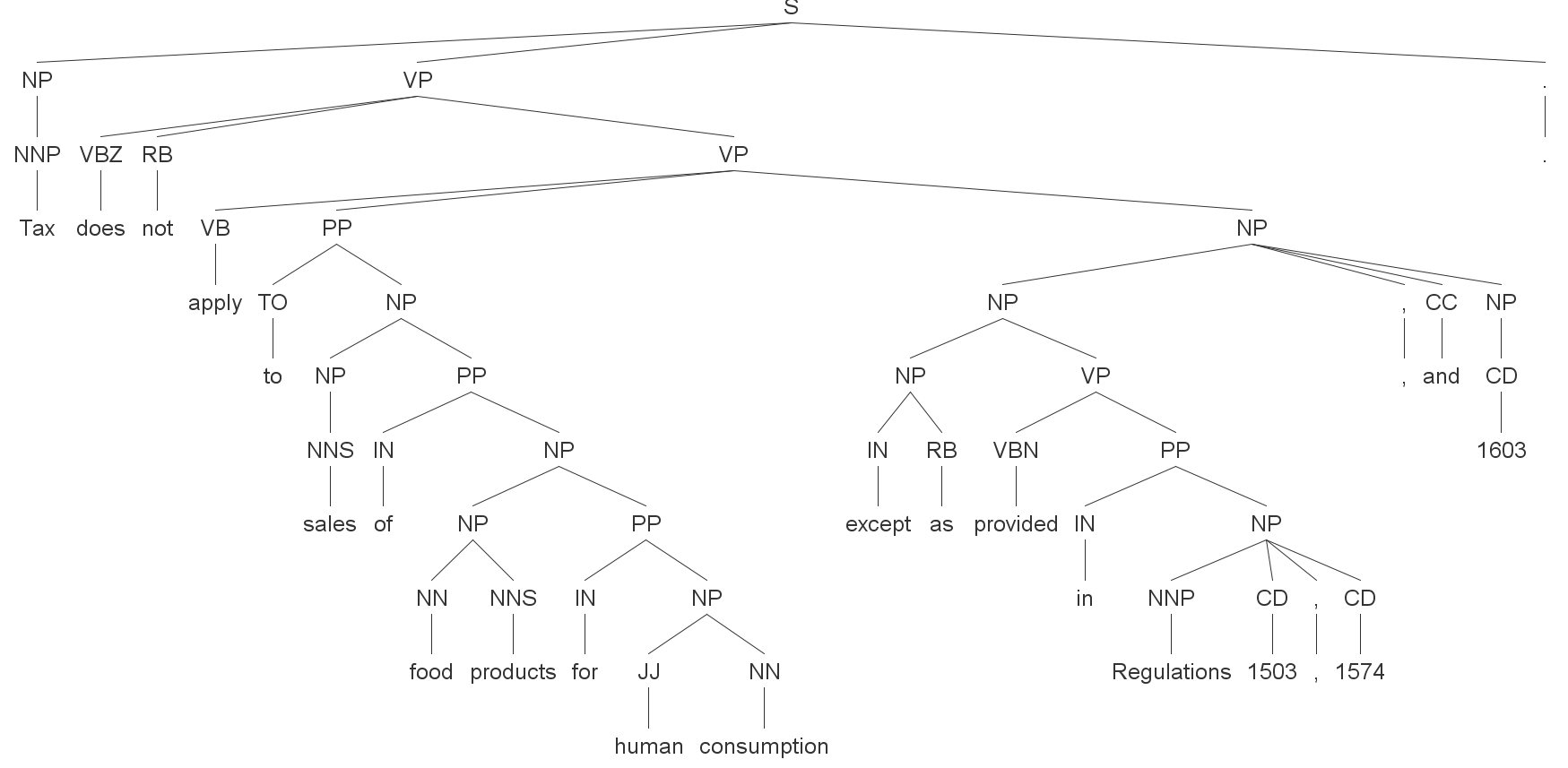

Problems with Probabilistic Parsing

We are using statistical techniques to increase the automation of logical and semantic disambiguation, but nothing is easy with natural language.

Here is the Stanford Parser (the probabilistic context-free grammar version) applied to a couple of sentences. There is nothing wrong with the Stanford Parser! It’s state of the art and worthy of respect for what it does well.

Confessions of a production rule vendor (part 2)

Going on 5 years ago, I wrote part 1. Now, finally, it’s time for the rest of the story.

Continue reading “Confessions of a production rule vendor (part 2)”

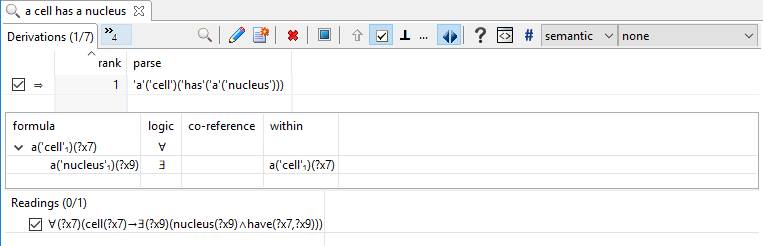

Simply Logical English

This is not all that simple an article, but it walks you through, from start to finish, how we get from English to logic. In particular, it shows how English sentences can be directly translated into formal logic for use in automated reasoning with theorem provers, logic programs as simple as Prolog, and even production rule systems.

There is a section in the middle that is a bit technical about the relationship between full logic and more limited systems (e.g., Prolog or production rule systems). You don’t have to appreciate the details, but we include them to avoid the impression of hand-waving.

The examples here are trivial. You can find many more, and more complex, examples throughout Automata’s web site.

Consider the sentence, “A cell has a nucleus.”:

Natural Intelligence

Deep natural language understanding (NLU) is different from deep learning, as is deep reasoning. Deep learning facilitates deep NLP and will facilitate deeper reasoning, but it’s deep NLP for knowledge acquisition and question answering that seems most critical for general AI. If that’s the case, we might call such general AI “natural intelligence”.

Deep learning on its own delivers only the most shallow reasoning and embarrasses itself due to its lack of “common sense” (or any knowledge at all, for that matter!). DARPA, the Allen Institute, and deep learning experts have come to their senses about the limits of deep learning with regard to general AI.

General artificial intelligence requires all of it: deep natural language understanding[1], deep learning, and deep reasoning. The deep aspects are critical but no more so than knowledge (including “common sense”).[2]

Continue reading “Natural Intelligence”

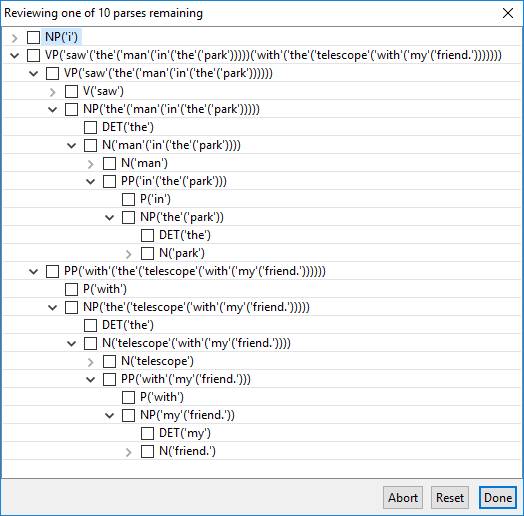

Iterative Disambiguation

In a prior post we showed how extraordinarily ambiguous, long sentences can be precisely interpreted. Here, upon request, we take a simpler look.

Let’s take a sentence that has more than 10 parses and configure the software to disambiguate among no more than 10.

Once again, this is a trivial sentence to disambiguate in seconds without iterative parsing!

The immediate results might present:

Suppose the intent is not that the telescope is with my friend, so veto “telescope with my friend” with a right-click.

“Only full page color ads can run on the back cover of the New York Times Magazine.”

A decade or so ago, we were debating how to educate Paul Allen’s artificial intelligence in a meeting at Vulcan headquarters in Seattle with researchers from IBM, Cycorp, SRI, and other places.

We were talking about how to “engineer knowledge” from textbooks into formal systems like Cyc or Vulcan’s SILK inference engine (which we were developing at the time). Although some progress had been made in prior years, the onus of acquiring knowledge using SRI’s Aura remained too high and the reasoning capabilities that resulted from Aura, which targeted University of Texas’ Knowledge Machine, were too limited to achieve Paul’s objective of a Digital Aristotle. Unfortunately, this failure ultimately led to the end of Project Halo and the beginning of the Aristo project under Oren Etzioni’s leadership at the Allen Institute for Artificial Intelligence.

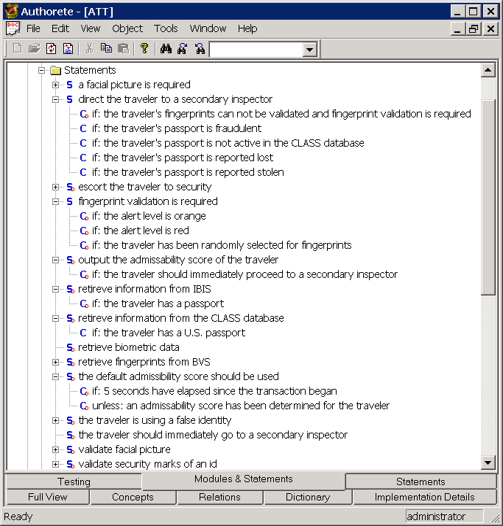

At that meeting, I brought up the idea of simply translating English into logic, as my former product called “Authorete” did. (We renamed it before Haley Systems was acquired by Oracle, prior to the meeting.)