Going on 5 years ago, I wrote part 1. Now, finally, it’s time for the rest of the story.

Continue reading “Confessions of a production rule vendor (part 2)”

systems that know and understand and think and learn

A decade or so ago, we were debating how to educate Paul Allen’s artificial intelligence in a meeting at Vulcan headquarters in Seattle with researchers from IBM, Cycorp, SRI, and other places.

We were talking about how to “engineer knowledge” from textbooks into formal systems like Cyc or Vulcan’s SILK inference engine (which we were developing at the time). Although some progress had been made in prior years, the onus of acquiring knowledge using SRI’s Aura remained too high and the reasoning capabilities that resulted from Aura, which targeted University of Texas’ Knowledge Machine, were too limited to achieve Paul’s objective of a Digital Aristotle. Unfortunately, this failure ultimately led to the end of Project Halo and the beginning of the Aristo project under Oren Etzioni’s leadership at the Allen Institute for Artificial Intelligence.

At that meeting, I brought up the idea of simply translating English into logic, as my former product called “Authorete” did. (We renamed it before Haley Systems was acquired by Oracle, prior to the meeting.)

Here is a graphic on how various reasoning technologies fit the practical requirements for reasoning discussed below:

This proved surprisingly controversial during correspondence with colleagues from the Vulcan work on SILK and its evolution at http://www.coherentknowledge.com.

The requirements that motivated this were the following: Continue reading “Requirements for Logical Reasoning”

In preparation for generating RIF and SBVR from the Linguist, we have produced an OWL ontology for the pertinent aspects of the SBVR specification. We hope that this is helpful to others and would sincerely appreciate any corrections or comments on how to improve it.

Paul

Our efforts at acquiring deep knowledge from a college biology text have enabled us to answer a number of questions that are beyond what has been previously demonstrated.

For example, we’re answering questions like:

A couple of these are at higher levels on the Bloom scale of cognitive skills than Watson can reach (which is significantly higher than search engines).

As some other posts have shown in images, we can translate completely natural sentences into formal logic. We actually do the reasoning using Vulcan’s SILK, which has great capabilities, including defeasibility. We can also output to RIF or SBVR, but the temporal aspects and various things such as modality and the need for defeasibility favor SILK or Cyc for the best reasoning and QA performance.
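To make “defeasibility” a little more concrete, here is a minimal Python sketch. It is not SILK syntax and does not reproduce SILK’s semantics; it only shows the basic idea that a default conclusion can be overridden by a more specific, higher-priority rule. The `Rule` class, the integer priority scheme, and the toy biology rules are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    premises: frozenset   # facts that must all hold for the rule to fire
    conclusion: str       # a literal; a leading "-" marks negation
    priority: int = 0     # assumption of this sketch: higher defeats lower


def negate(literal: str) -> str:
    """Return the complementary literal ("p" <-> "-p")."""
    return literal[1:] if literal.startswith("-") else "-" + literal


def conclude(facts: set, rules: list) -> set:
    """Single pass: keep conclusions of fired rules that beat every rival."""
    fired = [r for r in rules if r.premises <= facts]
    accepted = set()
    for r in fired:
        rivals = [o for o in fired if o.conclusion == negate(r.conclusion)]
        if all(r.priority > o.priority for o in rivals):
            accepted.add(r.conclusion)
    return accepted


# Hypothetical toy rules: a default and a more specific exception.
rules = [
    Rule("default", frozenset({"eukaryotic_cell"}), "has_nucleus", priority=1),
    Rule("exception",
         frozenset({"eukaryotic_cell", "mature_red_blood_cell"}),
         "-has_nucleus", priority=2),
]

print(conclude({"eukaryotic_cell"}, rules))
print(conclude({"eukaryotic_cell", "mature_red_blood_cell"}, rules))
```

With only the default applicable, the sketch concludes `has_nucleus`; once the exception also fires, it wins and the default conclusion is withdrawn. A real defeasible reasoner also has to chain rules and handle ties, which this single pass does not attempt.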

One thing in particular is worth noting: this approach does better with causality and temporal logic than is typically considered by most controlled natural language systems, whether they are translating to a business rules engine or a logic formalism, such as first order or description logic. The approach promises better application development and knowledge management capabilities for more of the business process management and complex event processing markets.

We’re working with the English Resource Grammar (ERG), OWL, and Vulcan’s SILK to educate the machine by translating textbooks into defeasible logic. Part of this involves an ontology that models semantics more deeply than the ERG does. The ERG is based on head-driven phrase structure grammar (HPSG), which provides deeper parsing. Together with the DELPH-IN infrastructure, the ERG also provides a simple under-specified semantic representation called minimal recursion semantics (MRS).

We’re having a great time using OWL to clarify and enrich the semantics of the rich model underlying the ERG. Here’s an example, FYI. If you’d like to know more (or help), please drop us a line! Overall the project will demonstrate our capabilities for transforming everyday sentences into RIF and business rule languages using SBVR extended with defeasibility and other capabilities, all modeled in the same OWL ontology.

What triggered this blog entry was a bit of a surprise in seeing that whether or not an adjective could be used depictively is sometimes encoded in the lexicon. This is one of the problems of TDL versus a description-logic based model with more expressiveness. It results in more lexical entries than necessary, which has been discussed by others when contrasted with the attributed logic engine (ALE), for example.

In trying to model the semantics of words like ‘same’ and ‘different’, we are scratching our heads about these lines from the ERG’s lexicon:

One of the interesting things about lexicalized grammars is that lexical entries (i.e., ‘words’) are described with almost arbitrary combinations of their lexical, syntactic, and semantic characteristics.

The preceding code is expressed in a type description language (TDL) used by the Lisp-based LKB and its C++ counterpart, PET, which are unification-based parsers that produce a chart of plausible parses with some efficiency. What is given above is already deeper than what you can expect from a statistical parser (but richer descriptions of lexical entries promise to make statistical parsing much better, too).

Unfortunately, there is no available documentation on why the ERG was designed as it is, so the meaning of the above is difficult to interpret. For example, the types of lexical entries (the symbols ending in ‘_le’) referenced above are defined as follows:

Needless to say, that’s a mouthful! Chasing this down, the following ‘informs’ us that “the same”, which uses type #2 above, is defined using the following lexical types:

Which is to say that it is non-conjunctive, complements a head to form a phrase, can’t be affixed, cannot constitute a main clause, and is a word.

The fact that the lexical entry for “the same” is adjectival is given the definition of the following type(s) used in the SYNSEM feature:

Which is to say that it is a comparative or superlative adjectival word (even though it consists of two lexemes in its ‘orthography’) that involves two semantic arguments, including one complement which may be an unexpressed prepositional phrase. A comparative or superlative adjective, in turn, is non-conjunctive, complements a head to form a phrase, is non-affix bearing (?), and non-clausal, as defined by the type ‘nonc-hm-nab’ above.

The types used in the syntax and semantic (i.e., SYNSEM) feature of the two lexical types are defined as follows (none of which is documented):

In a moment, we’ll discuss the types used in the second of these, but first, some basics on the semantics that are mixed with the syntax above.

In effect, the above indicates that a new ‘elementary predication’ will be needed in the MRS to represent the adjectival relationship in the logic derived in the course of parsing (i.e., that’s what ‘unsp_ind’ means, although it’s not documented, which I will try not to bemoan much further.)

The following indicates that the newly formed elementary predicate is not (initially) within any scope and that it has two arguments whose semantics (i.e., their RELations) are concatenated for propagation into the list of elementary predications that will constitute the MRS for any parses found.
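As a loose illustration of the idea (in Python, not the ERG’s TDL), an elementary predication can be pictured as a predicate with role-to-variable bindings and an optional scope handle, and the relations contributed by each constituent are simply concatenated. The class, the function, and the predicate and variable names below are hypothetical and are not taken from the ERG.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class EP:
    """An elementary predication: a predicate plus role-to-variable bindings."""
    predicate: str
    label: Optional[str]  # scope handle; None means "not yet within any scope"
    args: Dict[str, str] = field(default_factory=dict)


def combine_rels(*rels_lists: List[EP]) -> List[EP]:
    """Concatenate the relations contributed by each constituent, in order."""
    combined: List[EP] = []
    for rels in rels_lists:
        combined.extend(rels)
    return combined


# Made-up predicate names and variables, for illustration only.
adjective_rels = [EP("same_adj", None, {"ARG0": "e2", "ARG1": "x4", "ARG2": "x5"})]
noun_rels = [EP("answer_noun", "h7", {"ARG0": "x4"})]

mrs_rels = combine_rels(adjective_rels, noun_rels)
print([ep.predicate for ep in mrs_rels])  # ['same_adj', 'answer_noun']
```

Note how the adjective’s `ARG1` and the noun’s `ARG0` share the variable `x4`: that sharing is what ties the modifier to the thing it modifies once the lists are merged.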

The last of these expresses that the construction is lexical rather than phrasal (which includes clausal in the ERG).

Continuing with the definition of “the same” as an adjective, the following finally clarifies what it means to be a basic adjective:

Well, ‘clarifies’ might not have been the right word! Essentially, it indicates that the adjective may have an optional degree specifier (which semantically modifies the predicate of the adjective) and that the predicate specified in the lexical entry becomes the predicate used in the MRS. The rest is defined below:

Which ends with a bunch of basic setup types, except for constraining the relation for an adjective to be ‘basically adjectival’ on the first two lines. Also on these first two lines, it specifies that its subject and its specifier, if any, must be completed (i.e., empty) and agree with its non-conjunctive argument (which is not to say that it cannot be conjunctive, but that it modifies the conjunction as a whole, if so). Whether or not it is expressed will determine if there are any further predicates about its arguments or if its unexpressed argument is identified by an otherwise unreferenced variable in any resulting MRS.

The lexical grounding of this type specification is given below, indicating that it may (or may not) have phonology (e.g., pronunciation, such as whether its onset is voiced) and if, how, and with what punctuation it may appear, if any. In general, a semantic argument may be lexical or phrasal and optional, but if it appears, it corresponds to some semantic index (think variable) in some sort of predicate in any resulting MRS. (The *_min types do not constrain the values of their features any further.)

Note that an adjective is not possess-able, that it modifies something nominal (or a title), and that if it has a specifier, it is a quantifier or degree (e.g., ‘very’). Again, an adjective cannot function as a main clause or be conjunctive (in and of itself).

Finally, if you look far above you will see that the basic semantics of an adjective with an additional semantic argument is ‘intersective’, as in:

Here, the length 0 difference list and the following definitions indicate that intersective semantics do not accept anything but local modification:

AVM stands for ‘attribute value matrix’, which is the structure by which types and their features are defined (with nesting and unification constraints using # to indicate equality).
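For readers who have not met unification before, here is a deliberately simplified Python sketch in which an AVM is a nested dictionary and unification merges two of them, failing on any clash. It leaves out a great deal of what TDL does: there is no type hierarchy (atomic values must match exactly) and the `#` coreference tags are not modeled. The feature names and values are illustrative only.

```python
class UnificationFailure(Exception):
    """Raised when two feature structures carry incompatible values."""


def unify(a, b):
    """Unify two feature structures (nested dicts with atomic leaves).

    Returns a new structure containing the information from both;
    raises UnificationFailure if they disagree anywhere.
    """
    if isinstance(a, dict) and isinstance(b, dict):
        result = dict(a)
        for feature, value in b.items():
            if feature in a:
                result[feature] = unify(a[feature], value)
            else:
                result[feature] = value
        return result
    if a == b:
        return a
    raise UnificationFailure(f"{a!r} clashes with {b!r}")


# Illustrative feature names; not copied from the ERG.
adjective_type = {"HEAD": "adj", "MOD": {"HEAD": "noun"}}
lexical_entry = {"MOD": {"HEAD": "noun", "NUM": "sg"}, "ORTH": "same"}

print(unify(adjective_type, lexical_entry))
# {'HEAD': 'adj', 'MOD': {'HEAD': 'noun', 'NUM': 'sg'}, 'ORTH': 'same'}
```

Unifying `{"HEAD": "adj"}` with `{"HEAD": "noun"}` raises `UnificationFailure`, which is the dictionary-level analogue of a parse edge being rejected because its constraints are incompatible.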

By now you’re probably getting the idea that there is a fairly significant model of the English language here, including its lexical and syntactic aspects, but if you look, there is a lot about semantics, too.

The slides for my Business Rules Forum presentation on event semantics and focusing on events in order to simplify process definition and to facilitate more robust governance and compliance are at Event-centric BPM.

After the talk I spoke with Jan Verbeek and Gartjan Grijzen of Be Informed and reviewed their software, which is excellent. They have been quite successful with various government agencies in applying the event-centric methodology to produce goal-driven processing. Their approach is elegant and effective. It clearly demonstrates the merits of an event-centric approach and the power that emerges from understanding event-dependencies. Also, it is very semantic, ontological, and logic-programming oriented in its approach (e.g., they use OWL and a backward-chaining inference engine).
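The flavor of goal-driven processing over event dependencies can be sketched with a few lines of backward chaining in Python. This is not Be Informed’s engine or data model; the event names and the rule format (a goal mapped to alternative lists of prerequisite events) are hypothetical.

```python
from typing import Dict, FrozenSet, List, Set


def achievable(goal: str,
               rules: Dict[str, List[List[str]]],
               occurred: Set[str],
               pending: FrozenSet[str] = frozenset()) -> bool:
    """Backward-chain from a goal event to events that have already occurred."""
    if goal in occurred:
        return True
    if goal in pending:  # guard against circular dependencies
        return False
    return any(
        all(achievable(p, rules, occurred, pending | {goal}) for p in prerequisites)
        for prerequisites in rules.get(goal, [])
    )


# Hypothetical event dependencies for a permit process.
RULES = {
    "permit_granted": [["application_received", "fee_paid", "inspection_passed"]],
    "inspection_passed": [["inspection_scheduled", "report_favorable"]],
}

occurred = {"application_received", "fee_paid",
            "inspection_scheduled", "report_favorable"}
print(achievable("permit_granted", RULES, occurred))                 # True
print(achievable("permit_granted", RULES, occurred - {"fee_paid"}))  # False
```

Nothing here prescribes the order in which the events happen. The dependencies say only what must be true before the goal can be reached, which is the separation of process description from procedural implementation discussed in the talk.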

They do not have the top-down knowledge management approach that I advocate nor do they provide the logical verification of governing policies and compliance (i.e., using theorem provers) that I mention in the talk (see Guido Governatori’s 2010 publications and Travis Breaux’s research at CMU, for example) but theirs is the best commercially deployed work in separating business process description from procedural implementation that comes to mind. (Note that Ed Barkmeyer of NIST reports some use of SBVR descriptions of manufacturing processes with theorem provers. Some in automotive and aerospace industries have been interested in this approach for quality purposes, too.)

Be Informed is now expanding into the United States with the assistance of Mills Davis and others. Their software is definitely worth consideration and, in my opinion, is more elegant and effective than the generic BPMN approach.

If you are considering the use of any of the following business rules management systems (BRMS):

you can learn a lot by carefully examining this video on decisions using scoring in Ilog. (The video is also worth considering with respect to Corticon since it authors and renders conditions, actions, and if-then rules within a table format.)

This article is a detailed walk-through that stands completely independently of the video (I recommend skipping the first 50 seconds and watching for 3 minutes or so). You will find detailed commentary and insights here, sometimes fairly critical but in places complimentary. JRules is a mature and successful product. (This is not to say to a CIO that it is an appropriate or low risk alternative, however. I would hold on that assessment pending an understanding of strategy.)

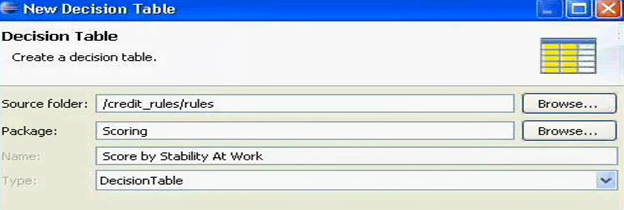

The video starts by creating a decision table using this dialog:

Note that the decision reached by the resulting table is labeled but not defined, nor is the information needed to consult the table specified. As it turns out, this table will take an action rather than make a decision. As we will see, it will “set the score of result to a number”. As we will also see, it references an application. Given an application, it follows references to related concepts, such as borrowers (which it errantly considers synonymous with applicants), concerning which it further pursues employment information.
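To picture the shape of such a table, here is a small Python sketch of a first-match decision table that navigates from an application to its borrower’s employment and yields a score. It is not JRules code and does not reproduce the table in the video; the classes, thresholds, and scores are invented for illustration. Unlike the dialog above, the sketch states its input (an `Application`) and its result (an integer score) explicitly in the function signature.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Employment:
    years_employed: float
    self_employed: bool


@dataclass
class Borrower:
    employment: Employment


@dataclass
class Application:
    borrower: Borrower


@dataclass
class Row:
    condition: Callable[[Employment], bool]
    score: int


# Hypothetical rows, evaluated top to bottom; the first match wins.
TABLE: List[Row] = [
    Row(lambda e: e.self_employed and e.years_employed < 2, 0),
    Row(lambda e: e.years_employed < 2, 5),
    Row(lambda e: True, 10),  # catch-all row
]


def employment_score(application: Application) -> int:
    """Follow application -> borrower -> employment, then consult the table."""
    employment = application.borrower.employment
    for row in TABLE:
        if row.condition(employment):
            return row.score
    raise ValueError("no row matched")


print(employment_score(Application(Borrower(Employment(1.0, True)))))   # 0
print(employment_score(Application(Borrower(Employment(5.0, False)))))  # 10
```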

Continue reading “IBM Ilog JRules for business modeling and rule authoring”

Today, I came upon some commentary by a business rule colleague, Carlos Serrano-Morales of Fair Isaac, concerning a presentation I made at the Business Rules Forum. During the presentation I showed some sentences that are beyond the current state of the art in the business rules industry. Generally speaking, these were logical statements that did not use the word “if”. (Note, however, that many of them could be expressed in SBVR, OMG’s semantics of business vocabulary and rules standard.) Carlos argued that such statements should be more precisely articulated within the specific context of a business process.

Here is the slide that triggered the controversy:

Ron Ross was kind enough to send me a copy of the recently published 3rd edition of his book, Business Rule Concepts. Ron has been at the forefront of mainstreaming business rule capture for decades. Personally, I am most fond of his leadership in establishing the Object Management Group’s Semantics of Business Vocabulary and Rules standard (OMG’s SBVR). This book is an indispensable backgrounder and introduction to the concepts necessary to effectively manage business rules using this standard.