Consider the following disambiguation result from a user of Automata’s Linguist™.

Continue reading “Properly disambiguating a sentence using the Linguist™”

systems that know and understand and think and learn

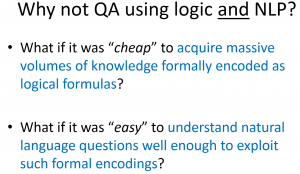

This is a must-watch video from the Allen Institute for AI for anyone seriously interested in artificial intelligence. It’s 70 minutes long, but worth it. Some of the highlights from my perspective are:

The astute viewer and blog reader will recognize this slide as discussed by Oren Etzioni here.

Here is a graphic on how various reasoning technologies fit the practical requirements for reasoning discussed below:

This proved surprisingly controversial in correspondence with colleagues from Vulcan’s work on SILK and its evolution at http://www.coherentknowledge.com.

The requirements that motivated this were the following: Continue reading “Requirements for Logical Reasoning”

We’re collaborating on some educational work and came across this sentence in a textbook on finance and accounting:

We use statistical NLP but assist with resolving its ambiguities. In doing this, we relate questions, answers, and explanations to the text.

We also extract the terminology and produce a rich lexicalized ontology of the subject matter for pedagogical uses, assessment, and adaptive learning.

Here’s one that just struck me as interesting. This is a case where the choice looks like it won’t matter much either way, but …

We are collaborating in the acquisition of knowledge concerning clinical trials. Initially, we are looking at trials related to pancreatic cancer, such as A Study Using 18F-FAZA and PET Scans to Study Hypoxia in Pancreatic Cancer.

At http://clinicaltrials.gov, each trial is rendered as HTML for browsing, generated from underlying XML files that can be downloaded. Although we can automatically parse the underlying XML into content for knowledge acquisition, this article looks at acquiring the knowledge about an individual trial using the web presentation. In particular, we look at the logical, semantic, and linguistic issues of understanding eligibility criteria. Continue reading “Helping people find clinical trials for which they are eligible”
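Pulling the eligibility text out of the downloadable XML is the easy part. Understanding it is not. The Python below is a minimal sketch that separates inclusion criteria from exclusion criteria. It assumes the legacy ClinicalTrials.gov study XML layout: a `clinical_study` root, with the criteria text under `eligibility/criteria/textblock`. The sample document, its criteria, and the `split_criteria` helper are hypothetical illustrations, not part of the workflow described here.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample shaped like the legacy ClinicalTrials.gov study XML.
# Element names are assumptions about that format; the criteria are invented.
SAMPLE = """<clinical_study>
  <brief_title>Hypothetical example study</brief_title>
  <eligibility>
    <criteria>
      <textblock>
        Inclusion Criteria:
          - Histologically confirmed pancreatic adenocarcinoma
          - Age 18 years or older
        Exclusion Criteria:
          - Pregnant or breastfeeding
      </textblock>
    </criteria>
    <gender>All</gender>
    <minimum_age>18 Years</minimum_age>
  </eligibility>
</clinical_study>"""


def split_criteria(xml_text):
    """Return (inclusion, exclusion) lists of criterion strings."""
    root = ET.fromstring(xml_text)
    block = root.findtext("eligibility/criteria/textblock", default="")
    sections = {"inclusion": [], "exclusion": []}
    current = None
    for raw in block.splitlines():
        line = raw.strip()
        lower = line.lower()
        if lower.startswith("inclusion criteria"):
            current = "inclusion"
        elif lower.startswith("exclusion criteria"):
            current = "exclusion"
        elif line.startswith("-") and current:
            # Drop the leading bullet and surrounding spaces.
            sections[current].append(line.lstrip("- ").strip())
    return sections["inclusion"], sections["exclusion"]


inclusion, exclusion = split_criteria(SAMPLE)
print("Include:", inclusion)
print("Exclude:", exclusion)
```

All this yields is a set of strings. The logical, semantic, and linguistic work of interpreting each criterion still remains.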

Recently, John Sowa commented, on LinkedIn or in correspondence with some of us at Coherent Knowledge Systems, on the old distinction, due to Roger Schank, between the Neats and the Scruffies. The Neats want nice formal logics as the basis of artificial intelligence. This includes anyone who prefers classical logic (e.g., Common Logic, RIF-BLD, or SBVR) or standard ontologies (e.g., OWL-DL) for representing knowledge and reasoning with it. The Scruffies may use well-defined technology, but are not constrained by it. They’ll do whatever they think works now, whether or not it is a good long-term solution and despite its shortcomings, as long as it meets immediate objectives.

Watson is scruffy. It doesn’t try to understand or formally represent knowledge. It combines many effective technologies into an evidentiary framework that lets it “guess” well.
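Here is a minimal sketch of what an evidentiary framework can look like. It assumes that several independent scorers have already scored each candidate answer, and that a simple logistic model merges those scores. The feature names, weights, bias, and answer threshold are hypothetical and chosen only for illustration. They are not Watson's actual scorers or parameters.

```python
import math

# Hypothetical evidence features, each a score in [0, 1] from a separate scorer.
# Names, weights, bias, and threshold are illustrative assumptions only.
WEIGHTS = {"passage_support": 2.0, "type_match": 1.5, "popularity": 0.5}
BIAS = -2.0
ANSWER_THRESHOLD = 0.5


def confidence(features):
    """Merge evidence scores into a probability-like confidence via a sigmoid."""
    z = BIAS + sum(WEIGHTS[name] * score for name, score in features.items())
    return 1.0 / (1.0 + math.exp(-z))


def best_guess(candidates):
    """Return (answer, confidence), or (None, confidence) if not confident enough."""
    answer = max(candidates, key=lambda a: confidence(candidates[a]))
    conf = confidence(candidates[answer])
    return (answer if conf >= ANSWER_THRESHOLD else None), conf


candidates = {
    "candidate_a": {"passage_support": 0.3, "type_match": 0.2, "popularity": 0.9},
    "candidate_b": {"passage_support": 0.8, "type_match": 0.9, "popularity": 0.7},
}
answer, conf = best_guess(candidates)
print(answer, round(conf, 3))
```

Nothing in this model represents what an answer means. It only weighs evidence, which is exactly what makes the approach scruffy.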

Today, in response to continued discussion in the Natural Language Processing group on LinkedIn under the topic “This is Watson”, I’m posting the following presentation on Project Sherlock and the Linguist vs. Google and IBM.

Essentially, the neat approach is more viable today than ever. So, chalk one up for the neats, including Dr. Sowa and Menno Mofait, who commented in that discussion.

During a presentation at CMU after winning the game show, IBM admitted that in order to get the last leg of improvement needed to win Jeopardy!, they needed to do some “neat” ontological knowledge acquisition, too!

If you are using one of the more popular rules engines, chances are you can blame me. I popularized the technology of forward-chaining production rules based on the Rete algorithm. Others have certainly contributed; my path is the one that led to open-source implementations and many commercial products, including those of IBM, Oracle, SAP, TIBCO, Red Hat, and too many others to mention (e.g., see this).
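For readers who haven't built one, here is a minimal Python sketch of the match-select-act cycle behind forward-chaining production rules. It assumes two common conventions:

- Salience-based conflict resolution, where the highest-salience rule fires first.
- Refraction, where each rule instantiation fires at most once.

The `Rule` class, `run`, and the ancestor rules are hypothetical. On every cycle, the match step naively re-evaluates every rule against all of working memory. The Rete algorithm exists to avoid that repeated work: it caches partial matches in a network, so each cycle only processes changes to working memory.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, Iterable, Set, Tuple

Fact = Tuple[str, ...]
Binding = Tuple[str, ...]


@dataclass(frozen=True)
class Rule:
    name: str
    salience: int  # higher salience wins conflict resolution
    match: Callable[[FrozenSet[Fact]], Iterable[Binding]]  # LHS: find bindings
    act: Callable[[Binding], Set[Fact]]  # RHS: facts to assert for a binding


def run(rules, facts):
    """Forward-chain until no rule instantiation is left to fire."""
    wm: Set[Fact] = set(facts)
    fired = set()  # refraction: each (rule, binding) fires at most once
    while True:
        # Match: naively re-evaluate every rule against all of working memory.
        snapshot = frozenset(wm)
        conflict_set = [(r, b) for r in rules for b in r.match(snapshot)
                        if (r.name, b) not in fired]
        if not conflict_set:
            return wm
        # Select: highest salience first; ties broken by rule name, then binding.
        rule, binding = min(conflict_set,
                            key=lambda rb: (-rb[0].salience, rb[0].name, rb[1]))
        # Act: fire exactly one instantiation, then go back to matching.
        fired.add((rule.name, binding))
        wm |= rule.act(binding)


def match_parent(wm):
    # parent(x, y) => binding (x, y)
    for f in wm:
        if f[0] == "parent":
            yield (f[1], f[2])


def match_chain(wm):
    # parent(x, y) AND ancestor(y, z) => binding (x, y, z)
    for f in wm:
        for g in wm:
            if f[0] == "parent" and g[0] == "ancestor" and f[2] == g[1]:
                yield (f[1], f[2], g[2])


rules = [
    Rule("base", 10, match_parent, lambda b: {("ancestor", b[0], b[1])}),
    Rule("chain", 5, match_chain, lambda b: {("ancestor", b[0], b[2])}),
]
facts = {("parent", "ann", "bob"), ("parent", "bob", "cal"), ("parent", "cal", "dee")}
ancestors = sorted(f[1:] for f in run(rules, facts) if f[0] == "ancestor")
print(ancestors)
```

Facts asserted by the `base` rule feed the `chain` rule on later cycles. That chaining of conclusions into further matches is what "forward-chaining" refers to.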

Today, I want to make clear that the future prospects for production rule technology are diminishing. My objective here is to explain why most rule-based technologies are no good and why some are much better. Although production rule technology is much better than most rule-based technologies, I also hope to make clear that in the age of IBM’s Watson, Google’s Brain, and the semantic web, production rule technology is inadequate.

Rules have become so pervasive in the software business that vendors of all types of software say they have them. Consider, for example, that even Microsoft Outlook has rules!

Continue reading “Confessions of a production rule vendor (part 1)”

At the SemTech conference last week, a few companies asked me how to respond to IBM’s Watson given my involvement with rapid knowledge acquisition for deep question answering at Vulcan. My answer varies with whether there is any subject matter focus, but essentially involves extending their approach with deeper knowledge and more emphasis on logical entailment in addition to textual entailment.

Today, in a discussion on the LinkedIn NLP group, there was some interest in finding more technical details about Watson. A year ago, IBM published its most detailed technical account of Watson to date in the IBM Journal of Research and Development. Most of those journal articles are available for free on the web. For convenience, here are my bookmarks to them.

Continue reading “Deep question answering: Watson vs. Aristotle”

Last week, I attended the FIBO (Financial Industry Business Ontology) Technology Summit along with 60 others.

The effort is building an ontology of fundamental concepts in financial services. Participants showed a surprisingly clear understanding that, for the resulting representation to be useful, it needs logical and rule-based functionality that does not fit within OWL (the web ontology language standard) or SWRL (a simple semantic web rule language). In discussing how to meet the reasoning and information processing needs of consumers of FIBO, there was surprisingly rapid agreement that the functionality of Flora-2 was most promising for use in defining and exemplifying the use of the emerging standard. Endorsers included Benjamin Grosof and myself, along with a team from SRI International. Others raised a number of excellent questions, such as those concerning open- vs. closed-world semantics, which are addressed by support for the well-founded semantics in Flora-2 and XSB.
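As a rough illustration of how the well-founded semantics handles closed-world reasoning, here is a minimal sketch of Van Gelder's alternating fixpoint for a tiny ground (variable-free) program. It is not how Flora-2 or XSB compute the semantics, and the rule encoding and example program are hypothetical.

Atoms in neither returned set are false, which is the closed-world reading:

- `abnormal` is not derivable, so `not abnormal` holds and `flies` comes out true.
- The mutually defeating `p` and `q` come out undefined rather than forcing an arbitrary choice.

```python
from typing import FrozenSet, List, Set, Tuple

# A ground rule (head, positive_body, negative_body) reads:
#   head :- p1, ..., pn, not n1, ..., not nm.
Rule = Tuple[str, Tuple[str, ...], Tuple[str, ...]]


def least_model(rules: List[Rule], assumed: FrozenSet[str]) -> FrozenSet[str]:
    """Least model of the Gelfond-Lifschitz reduct of `rules` w.r.t. `assumed`.

    A rule is kept only if none of its negated atoms is in `assumed`; kept
    rules then drop their negative literals and are applied to a fixpoint.
    """
    definite = [(head, pos) for head, pos, neg in rules if not set(neg) & assumed]
    model: Set[str] = set()
    changed = True
    while changed:
        changed = False
        for head, pos in definite:
            if head not in model and all(p in model for p in pos):
                model.add(head)
                changed = True
    return frozenset(model)


def well_founded(rules: List[Rule]):
    """Alternating fixpoint; returns (true_atoms, undefined_atoms)."""
    known: FrozenSet[str] = frozenset()
    while True:
        possible = least_model(rules, known)     # overestimate of what can hold
        new_known = least_model(rules, possible)  # underestimate: surely true
        if new_known == known:
            return known, possible - known       # everything else is false
        known = new_known


program: List[Rule] = [
    ("bird", (), ()),
    ("flies", ("bird",), ("abnormal",)),  # abnormal is never derivable
    ("p", (), ("q",)),                    # p and q defeat each other
    ("q", (), ("p",)),
]
true_atoms, undefined_atoms = well_founded(program)
print(sorted(true_atoms), sorted(undefined_atoms))
```

Under OWL's open-world semantics, by contrast, failing to derive `abnormal` would not license concluding that it is false.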

Thanks go to Vulcan for making the improvements to Flora and XSB that have been developed in Project Halo available to all!

As noted in prior posts about Project Sherlock, we have acquired knowledge from a biology textbook to build the business case for applications like Inquire. We reported our results at SemTech recently. The slides are available here.