As I mentioned in this post, we’re having fun layering questions and answers with explanations on top of electronic textbook content.

The basic idea is to use semantics to couple a graph of questions, answers, and explanations into the text. The trick is to do that well enough, and automatically enough, that we can deliver effective adaptive learning support. This is analogous to the knowledge graph that users of Knewton’s API create for their content. The difference is that we derive the graph from the content itself, including the “assessment items” (that’s what educators call questions, among other things). Essentially, we parse everything: the text and each question along with its answers and explanations. As we’ve described elsewhere, the result of this parsing is a precise lexical, syntactic, semantic, and logical understanding of each sentence in the content. But we don’t have to go nearly that far to exceed the state of the art here.
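To make the coupling concrete, here is a minimal sketch that links questions, answers, and explanations to the text sentences they share terms with. It assumes each sentence and item part has already been parsed into a set of normalized terms; the function name, the dictionary layout, and the relation names (`mentions`, `has_answer`, `explained_by`) are hypothetical, and shared-term linking is a deliberate simplification of the semantic coupling described above.

```python
from collections import defaultdict


def build_item_graph(sentences, items):
    """Couple assessment items to text sentences through shared normalized terms.

    sentences: dict mapping a sentence id to its set of normalized terms
    items: list of questions; each is a dict with "id", "terms", and "answers",
           and each answer has "id", "terms", and "explanation" (a set of terms)
    Returns a dict mapping each node id to a set of (relation, target) edges.
    """
    # Invert the text so each term points at the sentences that use it.
    index = defaultdict(set)
    for sid, terms in sentences.items():
        for term in terms:
            index[term].add(sid)

    edges = defaultdict(set)

    def link_to_text(node_id, terms):
        # Connect a question, answer, or explanation to every sentence sharing a term.
        for term in terms:
            for sid in index.get(term, ()):
                edges[node_id].add(("mentions", sid))

    for item in items:
        link_to_text(item["id"], item["terms"])
        for answer in item["answers"]:
            edges[item["id"]].add(("has_answer", answer["id"]))
            link_to_text(answer["id"], answer["terms"])
            explanation_id = answer["id"] + "/explanation"
            edges[answer["id"]].add(("explained_by", explanation_id))
            link_to_text(explanation_id, answer["explanation"])
    return dict(edges)
```

For example, with a sentence `"ch1.s1"` containing the term `accounting` and a question `"q1"` that also uses `accounting`, the result contains the edge `("mentions", "ch1.s1")` under `"q1"`. A real system would link through the semantic graph rather than raw term overlap.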

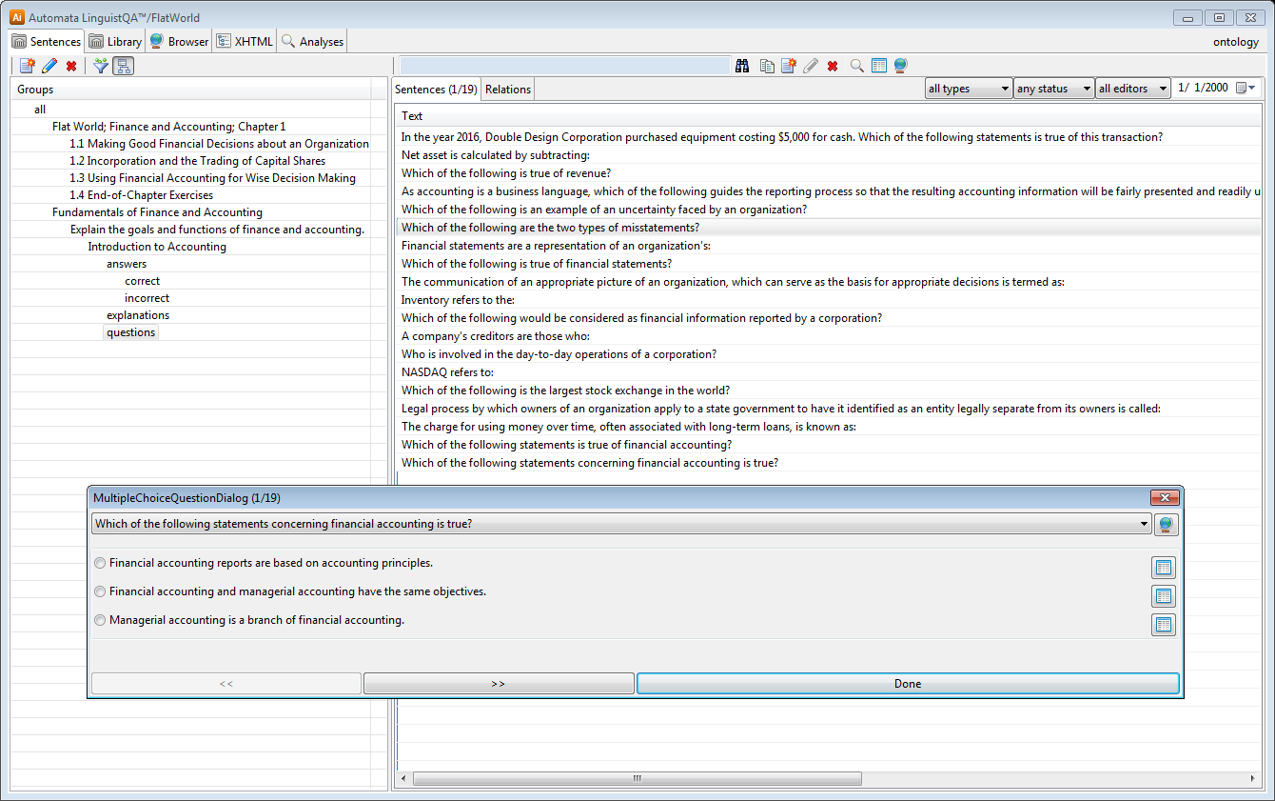

We automatically import the text, break it into sentences organized according to the document structure (i.e., in an outline of groups per chapter, section, …), and parse them. We keep track of ambiguities within the normal workflow of collaborative curation supported by the Linguist™, but proceed with the best parse according to statistical NLP. The result is a set of logical terms using a normalized vocabulary. The automated parsing cannot go so far as to produce a logical formula, but that would be more than we need (we’re not actually trying to answer the questions, as we were in Project Sherlock!).
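The “proceed with the best parse, but keep track of the ambiguity” step might look roughly like this. It is a sketch under stated assumptions: the `Parse` and `Sentence` classes, the per-parse `score`, and the `margin` threshold for deciding when a choice is close enough to send to curators are all hypothetical, not the Linguist’s actual data model.

```python
from dataclasses import dataclass


@dataclass
class Parse:
    terms: tuple[str, ...]   # normalized logical terms produced by this parse
    score: float             # hypothetical statistical-parser score


@dataclass
class Sentence:
    outline: tuple[str, ...]  # position in the document outline, e.g. ("ch1", "sec2")
    text: str
    parses: list[Parse]


def triage(sentences, margin=0.1):
    """Keep the best parse for every sentence; flag close calls for curation."""
    accepted, to_curate = {}, []
    for s in sentences:
        ranked = sorted(s.parses, key=lambda p: p.score, reverse=True)
        accepted[s.text] = ranked[0].terms  # proceed with the top-scoring parse
        # If the runner-up is nearly as likely, queue the sentence for curators.
        if len(ranked) > 1 and ranked[0].score - ranked[1].score < margin:
            to_curate.append((s.outline, s.text))
    return accepted, to_curate
```

Keeping the outline path with each flagged sentence lets curators see where in the chapter or section an ambiguity arises, while downstream processing never waits on them.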

Across all the sentences, including the questions and the explanations of answers, we have a graph of terms and inter-term relationships that supports algorithms such as comparing and contrasting sentences. Think of this graph as a semantic network (e.g., one represented in RDF). This semantic network is further enriched by a semantic web ontology (e.g., expressed in OWL) that is semi-automatically derived from the content. For example, when we see a term like “accounting information”, we know it is information, and we automatically acquire that net assets and total assets are both assets. We also automatically acquire that equipment is purchased by corporations, that net assets are calculated, that a reporting process is a process modified by a gerund derived from the verb “report”, and a lot more.
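A few of the acquisitions above can be approximated with a naive head-noun heuristic, and sentence comparison with simple set operations over terms. This is a hypothetical sketch, not the method we use: plain tuples stand in for RDF triples, the predicate names are placeholders (in OWL the is-a link would be `rdfs:subClassOf`), and the “-ing” rule is crude (it would turn “making” into “mak”, for instance).

```python
def head_noun_triples(terms):
    """Guess is-a links from compound noun phrases (naive head-noun heuristic).

    Assumes English compounds are head-final, so "net assets" is a kind of
    "assets", and treats an "-ing" modifier as a gerund of the verb obtained
    by dropping the suffix. Both rules are simplifications for illustration.
    """
    triples = set()
    for term in terms:
        words = term.split()
        if len(words) < 2:
            continue  # single words have no head/modifier structure here
        triples.add((term, "subClassOf", words[-1]))
        for modifier in words[:-1]:
            if modifier.endswith("ing") and len(modifier) > 5:
                triples.add((term, "modifiedByGerundOf", modifier[:-3]))
    return triples


def compare(terms_a, terms_b):
    """Compare two sentences by their term sets: what they share and where they differ."""
    a, b = set(terms_a), set(terms_b)
    return {"shared": a & b, "only_a": a - b, "only_b": b - a}
```

Given “accounting information”, “net assets”, “total assets”, and “reporting process”, this yields that the first is information, the next two are assets, and the last is modified by a gerund of “report”, which mirrors the examples above at a much shallower level than a full parse.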

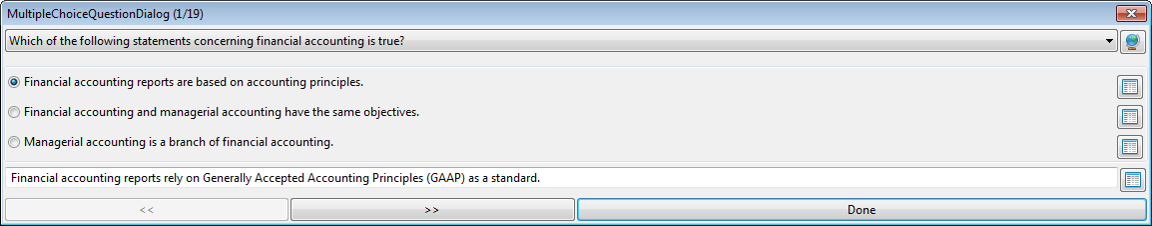

Well, I’m drifting away from the thought that prompted this post, so I’ll just show you a picture for now and move on:

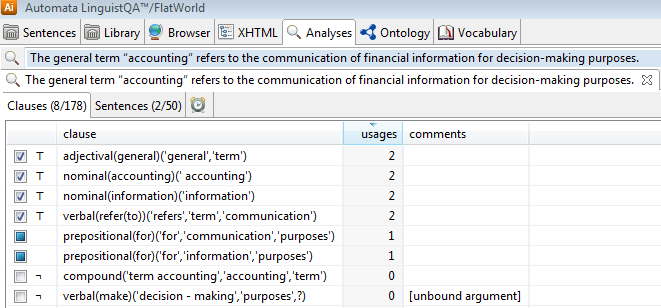

Personally, I’m interested in the difficulties that collaborating contributors sometimes face in disambiguating language. Looking at the automated parse results for some of these sentences, I saw the following residual:

How would you choose? Is the communication or the information for the purpose of making decisions? Does it matter?

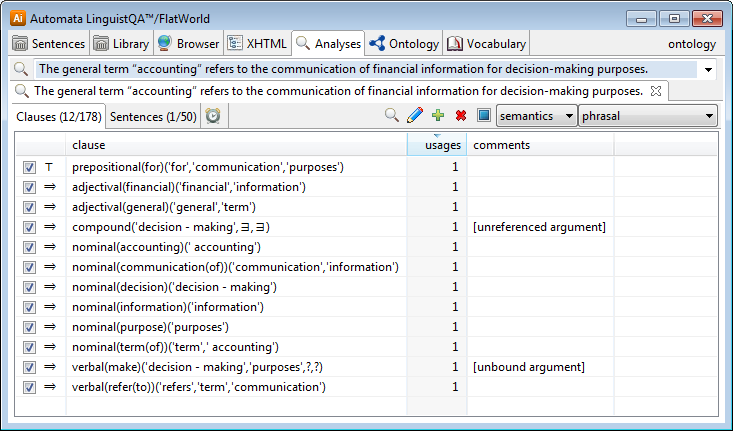

The following shows some of the results for one choice:

Note: there is a bug in how existentials are rendered in the live code I am working with (don’t worry about it; the words “make” and “decision” should be there).

This gives you an idea of the semantic information that we can acquire automatically and which can be curated to an arbitrary level of precision where appropriate.

Bottom line: the approach holds great promise for curating assessment items and learning objects into a collaboratively developed adaptive learning platform.

